How to do data profiling in Incorta

Sometimes we need a better understanding of our data. In Incorta, we can profile data using Spark Python (PySpark).

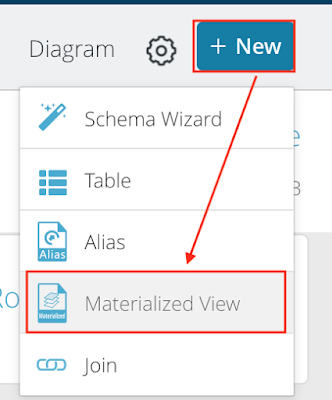

First, add a new Materialized View in Incorta and select Spark Python as the language.

Then there are two ways to do the data profiling.

Method 1:

Use df.describe()

This function returns count, mean, stddev, min, and max for each column. It only covers numeric and string columns, so other data types such as dates and booleans are skipped.

Method 2:

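Calculate each metric ourselves with Spark aggregate functions. This takes more code, but it lets us choose the metrics and also works for the data types that describe() skips.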
